Meten is Weten: How Installing Plausible on My Hugo Blog Led to a Three-Node BGP ECMP Varnish DaemonSet

Cover: after Piet Mondrian, Composition with Red, Yellow, Blue — three nodes joined by a black grid — network topology as De Stijl.

It started with a single, innocent question: does anyone actually read this?

I’d been running this Hugo blog for a while, writing posts about the homelab, the cluster, the occasionally catastrophic self-inflicted incidents. At some point the thought surfaced that it would be nice to know whether the words were reaching anyone beyond me and the search indexer. So I installed Plausible — privacy-respecting analytics, no cookies, one config line in Hugo — and moved on.

What I did not expect was that this one small decision would, over the following weeks, pull me into a rabbit hole that ended with a Varnish DaemonSet sitting on three Kubernetes nodes with BGP ECMP distributing traffic across them, a set of k6 load tests with embarrassingly wrong methodology, a small field trip to an Orange Pi 6 Plus running Varnish in a container, and a sheepish discovery about my images that any junior front-end developer would have caught in five minutes.

April 2026 — on how curiosity about a hit counter turned into a distributed caching project

The Starting Point: A Hugo Blog on a Three-Node Kubernetes Cluster

To understand why the story spirals the way it does, you need to know the terrain.

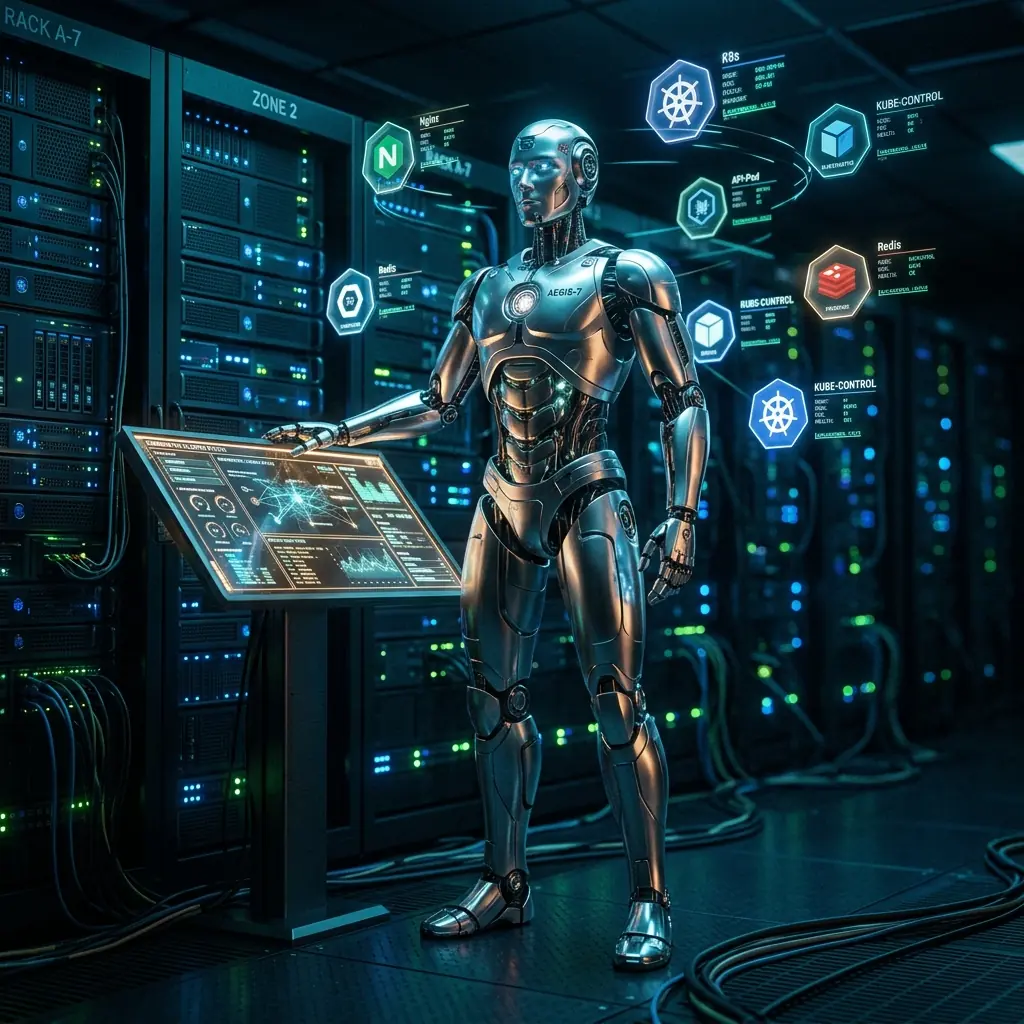

The blog runs as a Hugo static site, built by a GitLab CI pipeline and deployed as a container into a three-node Kubernetes cluster. The cluster sits behind a Mikrotik router that speaks BGP ECMP to the nodes: traffic arriving at the single external IP gets load-balanced across all three nodes at the routing level, before it ever touches Kubernetes.

| Node | NIC Speed | Notes |
|---|---|---|
| node02 | 10 Gbps | Main compute node, AMD GPU, 64 GB RAM |
| storage1 | 1 Gbps | NAS/control-plane node |
| orange-pi-max-1 | 1 Gbps | ARM64 edge node |

This setup is deliberate. With BGP ECMP (Equal-Cost Multi-Path), the Mikrotik holds equal-cost routes to the external IP via each node and picks one per connection by hashing packet header fields, which spreads connections across all three nodes. For a blog, this is obviously overkill. For a blog operator who happens to enjoy infrastructure, it is simply Tuesday.

Each node runs an nginx ingress controller pod. The nginx pod on a given node receives traffic, terminates TLS, and proxies to the backend. With Hugo serving small, static HTML pages, latency was low and nobody was complaining. Plausible was showing that actual humans were reading the posts. Life was good.

Then I started looking at numbers.

The First Temptation: What’s the Ceiling?

Knowing that people read your blog is one thing. Knowing how fast they read it — or more precisely, how fast the server is serving it — is something else. This is where the SRE instinct kicks in, and it is an instinct that does not stay idle for long.

Hugo serves pre-rendered HTML. There is no database query, no server-side rendering, no dynamic content. In theory, serving a Hugo page should be trivially fast. But “in theory” is not a metric. The Dutch have a saying: “meten is weten” — measuring is knowing. And I wasn’t measuring anything beyond traffic counts.

The natural improvement was obvious: a Varnish cache in front of Hugo. Varnish sits between nginx and the Hugo pods, absorbs repeated requests into memory, and serves them from RAM at near-wire speed. Hugo only sees the first request for any given URL; everything after that comes from Varnish’s malloc heap. For a static blog that essentially never changes between CI deployments, the cache hit rate should asymptote toward 100%.

Standing Up In-Cluster Varnish

Deploying Varnish into Kubernetes is straightforward if you avoid a few non-obvious traps.

The first is namespace. I initially put Varnish in a separate cache namespace to keep things tidy. This immediately created a cross-namespace routing problem: the nginx ingress on each node needed to reach the Varnish service, but an ExternalName Service is published in cluster DNS as a CNAME record, and nginx ingress’s Lua DNS resolver does not follow CNAME chains. It expects an A record. The workaround is a headless Service with a manually maintained Endpoints object pointing at the Varnish ClusterIP (which does resolve to an A record), but that felt inelegant. The real fix was simpler: move everything to the default namespace and eliminate the cross-namespace bridge entirely.

The second trap is Varnish’s startup behavior. Varnish resolves backend hostnames at VCL compile time, when the container starts — not lazily at request time. If the backend DNS name isn’t resolvable when varnishd launches, it refuses to start. This produces an error message that is accurate but cryptic if you’re expecting dynamic DNS:

backend hugo: cannot resolve host 'masterdjienocom.default.svc.cluster.local'

The fix is a small shell wrapper that polls DNS before handing off to varnishd:

    until getent hosts masterdjienocom.default.svc.cluster.local > /dev/null 2>&1; do
      echo "waiting for backend DNS..."; sleep 2
    done
    exec varnishd -F -f /etc/varnish/default.vcl -s malloc,256m -a 0.0.0.0:80

The VCL itself is straightforward: strip cookies (Hugo sets none), cache static assets for one hour with a 24-hour grace period, cache HTML pages for five minutes with a one-hour grace. Add X-Cache: HIT/MISS headers so you can verify the cache is working without digging into logs. This last part turns out to be crucial for load testing.
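Checking the X-Cache header by hand is easy to script. The helper below is a minimal sketch, not part of the original setup: `cache_status` is a hypothetical name, and it assumes the header value is exactly HIT or MISS as described above.

```shell
#!/bin/sh
# Print the X-Cache value (HIT or MISS) found in raw HTTP response headers on stdin.
# Header names are matched case-insensitively because HTTP/2 responses use lowercase.
cache_status() {
  tr -d '\r' | awk -F': *' 'tolower($1) == "x-cache" { print $2 }'
}
```

With network access, `curl -sI https://djieno.com/ | cache_status` run twice in a row would be expected to print MISS and then HIT on a cold cache, assuming both requests land on the same Varnish instance.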

With Varnish in place and the nginx ingress backend pointing at varnish-djieno:http, the architecture was set. Hugo serves one request per URL per deployment. Everything else comes from memory.

Load Testing: Why You Need More Than One Kind

Here is where “meten is weten” gets genuinely interesting, because how you measure matters at least as much as whether you measure at all.

k6 is a load testing tool written in Go with a JavaScript scripting API. I include it in every project I work on that has an HTTP interface. The habit is not optional — it is how you discover that your service works at 10 users and falls over at 100, before 100 users actually show up.

But “a k6 test” is not one thing. Different test shapes answer different questions:

Smoke test (2-5 VUs, 1 minute): Does it even work? Does anything immediately break? This is the first test you run after any deployment.

Load test (expected concurrency, 5-15 minutes): Does it perform acceptably at normal expected load? This is the benchmark you establish and then protect.

Stress test (2-5x expected load): Where does performance degrade? Not necessarily “where does it break” — often the more interesting question is “where does the 95th percentile start climbing?”

Spike test (ramp from 0 to 10x in seconds): Does a sudden burst of traffic (a Hacker News frontpage hit, a newsletter link) cause cascading failures, or does the system absorb the spike and recover?

Soak test (normal load, hours): Does memory grow unbounded? Does garbage collection cause latency spikes after 2 hours? Does Varnish start evicting hot objects once the cache fills? This is where you catch the problems that smoke tests and load tests miss entirely.

TTFB-focused test: Measures time-to-first-byte specifically, isolating the server’s processing time from network transfer time. This is the right metric for a cache layer: Varnish either has the object or it doesn’t, and you want to know how long that decision takes.
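Most of these shapes can be expressed with k6's own command-line flags (`--vus`, `--duration`, `--stage`). The mapping below is a rough sketch: `k6_args` and `BASE_VUS` are hypothetical names, and every number is a placeholder rather than a value used for this blog. The TTFB-focused test is left out because it is a choice of metric, not of load shape.

```shell
#!/bin/sh
# Map a test shape to k6 CLI flags. BASE_VUS stands for "expected concurrency"
# and defaults to an invented 50; all durations are placeholders.
k6_args() {
  base="${BASE_VUS:-50}"
  case "$1" in
    smoke)  echo "--vus 3 --duration 1m" ;;
    load)   echo "--vus $base --duration 10m" ;;
    stress) echo "--vus $((base * 3)) --duration 10m" ;;
    # Ramp to 10x in ten seconds, hold for a minute, then drop back to zero.
    spike)  echo "--stage 10s:$((base * 10)) --stage 1m:$((base * 10)) --stage 10s:0" ;;
    soak)   echo "--vus $base --duration 4h" ;;
    *)      echo "unknown test shape: $1" >&2; return 1 ;;
  esac
}
```

A run would then look like `k6 run $(k6_args spike) script.js`, where `script.js` is whatever test script you already have.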

I will tell you right now that I ran the wrong test at least once, drew confident conclusions from it, and had to be corrected by my own metrics. We will get to that.

Round One: The Fake URL Problem

The first k6 test against the Varnish-backed Hugo cluster looked like this:

✓ checks.........................: 37.44%

✓ http_req_failed................: 62.56%

62.56% failure rate. With a 99.98% cache hit rate.

These two numbers are not contradictory; they are simply measuring different things. The failure rate was entirely explained by the URLs in the test script: I had invented blog post slugs that sounded plausible (/blog/mikrotik-bgp-lab/, /blog/kubernetes-storage-deep-dive/) but did not actually exist. Hugo returns a 404 for each of them. Varnish, doing its job correctly, caches those 404 responses and serves them rapidly on subsequent requests. The cache was operating perfectly. The test was simply hammering a set of URLs that didn’t exist.

The lesson is embarrassingly simple but easy to miss when you’re focused on infrastructure metrics: your load test is only as good as the URLs you feed it. For a blog, this means scraping the actual site for real paths before building the test:

curl -s https://djieno.com/blog/ | grep -oP 'href="\K/blog/[^"]*' | sort -u

This gives you the real URL set. Every request in the test hits a page that actually exists, returns a 200, and exercises the cache properly.
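It is also cheap to confirm that every path in the list really returns a 200 before pointing k6 at it. The sketch below assumes a file with one path per line; `status_of_paths`, `non_200` and `BASE_URL` are hypothetical names introduced for illustration.

```shell
#!/bin/sh
# Read paths on stdin and print "<status> <path>" for each one.
# BASE_URL defaults to the site from this post; override it for other hosts.
status_of_paths() {
  while IFS= read -r path; do
    code=$(curl -s -o /dev/null -w '%{http_code}' "${BASE_URL:-https://djieno.com}$path")
    printf '%s %s\n' "$code" "$path"
  done
}

# Keep only the paths whose status code is not 200.
non_200() {
  awk '$1 != 200 { print $2 }'
}
```

Running `status_of_paths < paths.txt | non_200` (with `paths.txt` being your scraped list) should print nothing when every URL exists. Any output is a path that would have inflated the failure rate.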

Round Two: The Orange Pi Detour

With real URLs in hand, the next question was whether the in-cluster Varnish was actually providing value, and whether the cluster setup was better than a standalone Varnish node outside Kubernetes.

The Orange Pi 6 Plus was already running in the rack. The OP6+ is a 12-core ARM64 board drawing 15-25W, and it had spare capacity. The question was academic but irresistible: would a single Varnish instance on dedicated ARM hardware outperform the in-cluster setup?

I built the comparison properly, using the Orange Pi 5 Max as the k6 source — a neutral node on the same LAN segment, eliminating the question of test source location. The OP6+ ran Varnish 6.6 in an Incus LXC container, serving from its own MetalLB HTTP endpoint.

At 600 VUs, the results were close:

| Setup | Req/s | Median Latency | Notes |
|---|---|---|---|
| In-cluster Varnish (single Deployment) | 489 req/s | 28ms | All traffic via node02 |
| OPi6 standalone Varnish | 510 req/s | 14ms | Direct ARM serving |

The standalone node was about 4% ahead on throughput and halved median latency (14ms against 28ms), almost certainly because it avoided TLS termination overhead from nginx. But for a blog that serves pages to real users who perceive anything under 100ms as “instant,” neither gap is perceptible.

The more important architectural argument pointed the other way: resilience and topology. The in-cluster setup spans three nodes. The OP6+ is a single point of failure with a 1G NIC. And more relevantly, the cluster was already built with ECMP BGP. That bandwidth distribution only works if each node can serve traffic locally. Routing from three ingress nodes down to one cache node eliminates exactly the advantage that ECMP provides.

The ECMP Insight: DaemonSet Over Deployment

This led to the architectural realization that the original single-replica Varnish Deployment was undermining the cluster’s own design.

With one Varnish pod running on node02, the traffic flow looked like this:

External request → Mikrotik ECMP → node02's nginx → node02's Varnish ✓

External request → Mikrotik ECMP → storage1's nginx → node02's Varnish ✗ (cross-node hop)

External request → Mikrotik ECMP → opi-max's nginx → node02's Varnish ✗ (cross-node hop)

ECMP was distributing inbound connections across all three nodes. But then two out of three paths immediately forwarded to node02 to reach the single Varnish pod, nullifying the bandwidth distribution. node02’s 10G NIC was absorbing essentially all cache traffic regardless of which node received the original connection.

The fix is a DaemonSet: one Varnish pod per node, combined with internalTrafficPolicy: Local on the Service. That field, generally available since Kubernetes 1.26, routes Service traffic exclusively to pods on the same node as the caller and drops it if no local pod exists, which is why every node needs its own Varnish pod. The flow becomes:

External request → Mikrotik ECMP → node02's nginx → node02's Varnish ✓

External request → Mikrotik ECMP → storage1's nginx → storage1's Varnish ✓

External request → Mikrotik ECMP → opi-max's nginx → opi-max's Varnish ✓

Each node has its own warm cache. Each nginx pod hits its co-located Varnish pod with zero cross-node network hops. The ECMP bandwidth distribution is fully utilized. Node02’s 10G NIC handles one third of traffic instead of all of it.

One gotcha: storage1 is the control-plane node, and it was running at 98% CPU requests — close enough that the 50m CPU request on the Varnish pod couldn’t be scheduled. Reducing the request to 10m resolved it. Varnish serving objects from a malloc heap is essentially free in CPU terms; the reservation was conservative and unnecessary.

The DaemonSet conversion also cleaned up the architecture. Moving all Varnish resources into the default namespace eliminated the namespace bridge, the ExternalName service, and the manual Endpoint workaround entirely.
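The pieces described in this section can be sketched as a pair of manifests. This is an illustration under assumptions, not the blog's actual configuration: the `app: varnish-djieno` label and the default image are placeholders, and the VCL ConfigMap and DNS-wait wrapper are omitted for brevity. The Service name, port name, namespace and 10m CPU request come from the text above.

```shell
#!/bin/sh
# Print a DaemonSet and Service for node-local Varnish.
# VARNISH_IMAGE is a hypothetical override; the label below is a placeholder.
render_varnish_manifests() {
  cat <<EOF
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: varnish-djieno
  namespace: default
spec:
  selector:
    matchLabels:
      app: varnish-djieno
  template:
    metadata:
      labels:
        app: varnish-djieno
    spec:
      containers:
        - name: varnish
          image: ${VARNISH_IMAGE:-varnish}
          ports:
            - name: http
              containerPort: 80
          resources:
            requests:
              cpu: 10m   # small enough to schedule on the nearly full control-plane node
---
apiVersion: v1
kind: Service
metadata:
  name: varnish-djieno
  namespace: default
spec:
  selector:
    app: varnish-djieno
  internalTrafficPolicy: Local   # only route to a Varnish pod on the caller's node
  ports:
    - name: http
      port: 80
      targetPort: http
EOF
}
```

Applying it would be `render_varnish_manifests | kubectl apply -f -`. Keeping the manifest behind a function makes it easy to diff or lint before it reaches the cluster.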

Round Three: The “ResponseType: None” Confusion

With three Varnish pods running, the natural question was whether the DaemonSet actually improved throughput. The k6 test from OPi5 Max gave a definitive answer:

http_reqs......................: 47433 226 req/s

http_req_waiting...............: avg=303ms med=24.77ms

http_req_receiving.............: avg=999ms med=185ms

data_received..................: 1.6 GB 7.5 MB/s

226 req/s. My prediction before the test had been 1000-1200 req/s. I was wrong by a factor of five.

The temptation was to conclude the DaemonSet had introduced a regression. But the http_req_waiting median of 24.77ms told the real story: Varnish was responding in 25 milliseconds. The server’s work was done in 25ms. The remaining ~1 second in each iteration was network transfer time for the HTML body.

I attempted to fix this by adding responseType: 'none' to the k6 script, expecting it to skip the body download. This is a common k6 pattern for measuring TTFB without being bottlenecked by body transfer. The result was identical: still 226 req/s, still 1.6 GB transferred.

The reason: responseType: 'none' in k6 does not skip the network transfer. It skips storing the body in the Go runtime’s memory. The data still traverses the TCP connection from server to client; k6 just discards the bytes as they arrive rather than buffering them. data_received still counts every byte. For measuring true server throughput independent of response body size, you need HTTP HEAD requests — or you need to measure http_req_waiting specifically, not http_req_duration.

The actual Varnish performance was right there in the numbers: 25ms median TTFB. That is the metric that matters for a cache layer. The 226 req/s figure was measuring “how fast can the OPi5 Max download 1.6GB of blog posts across 600 concurrent HTTPS connections”, which is a perfectly valid stress test of TCP connection handling but says nothing useful about Varnish.

Lesson: choose your metric before you run the test, not after. A throughput test and a TTFB test look identical in k6 script form but answer completely different questions. Running the wrong one and drawing confident conclusions from it is how you end up explaining to yourself why your DaemonSet performed “worse” than your single-pod Deployment.
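For a quick TTFB check outside k6, curl's timing variables give a rough equivalent. The sketch below is an approximation under assumptions: `median` and `ttfb_samples` are hypothetical helpers, and subtracting `time_pretransfer` from `time_starttransfer` only roughly corresponds to what k6 reports as http_req_waiting. curl still downloads the body here; it just does not influence the number.

```shell
#!/bin/sh
# Print the median of the numbers on stdin (one per line).
median() {
  LC_ALL=C sort -n | awk '
    { v[NR] = $1 }
    END {
      if (NR == 0) exit 1
      if (NR % 2) print v[(NR + 1) / 2]
      else print (v[NR / 2] + v[NR / 2 + 1]) / 2
    }'
}

# Sample the wait for the first byte COUNT times for URL, in seconds.
# time_pretransfer covers DNS, TCP and TLS setup, so the difference is
# mostly the time the server took to start answering.
ttfb_samples() {
  url=$1 count=$2 i=0
  while [ "$i" -lt "$count" ]; do
    curl -s -o /dev/null -w '%{time_pretransfer} %{time_starttransfer}\n' "$url" |
      awk '{ print $2 - $1 }'
    i=$((i + 1))
  done
}
```

Something like `ttfb_samples https://djieno.com/ 20 | median` gives a single-client median. It says nothing about behaviour under 600 concurrent connections, but it is the same question the http_req_waiting line answers.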

The Thing I Had Completely Forgotten

At some point during this whole exercise — during the part where I was measuring cache hit rates and ECMP topology and TCP connection pools — I realized something embarrassing.

I had never converted the blog images to WebP.

Every post has a cover image. Some posts have diagrams, screenshots, photos of hardware. All of them were PNGs. Some of them were large PNGs — uncompressed, unoptimized, pulled from a camera or a screenshot tool and dropped directly into /static/img/. The kind of thing that makes a Lighthouse audit go red and a front-end engineer visibly wince.

And here is the dark comedy of it: I hadn’t noticed. Not because I don’t care about performance — I had just spent three weeks optimizing the entire serving stack down to the egress NIC — but because the network was too fast to notice. node02’s 10G NIC was serving a 4MB PNG in under 50 milliseconds to LAN clients. The site felt instant. The images loaded before your eye could parse that they were large.

The fast infrastructure had been quietly covering for a basic web optimization failure that I would have caught immediately if the serving latency were a hundred milliseconds higher. Speed is a great problem-hider.

The fix was automated: a CI pipeline step that runs cwebp on every image, generates the .webp alongside the original, and the Hugo template serves WebP to browsers that support it with a PNG fallback. A 4MB PNG becomes a 280KB WebP. The blog became measurably faster for users on real-world internet connections — not the 10G LAN connection I’d been testing from.
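The conversion step itself can be as small as a loop around cwebp. This is a sketch of the idea rather than the pipeline's actual script: `convert_to_webp` is a hypothetical name, and quality 80 is an arbitrary starting point, not the setting used for this blog.

```shell
#!/bin/sh
# Convert every PNG under a directory to WebP, writing the .webp next to the
# original so the PNG remains available as a fallback.
convert_to_webp() {
  find "$1" -type f -name '*.png' | while IFS= read -r src; do
    dst="${src%.png}.webp"
    cwebp -quiet -q 80 "$src" -o "$dst" || echo "failed: $src" >&2
  done
}
```

In CI this would run as `convert_to_webp static/img` before the Hugo build, so the generated files end up in the published site.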

This is why you need real-user monitoring alongside synthetic load tests. Plausible tells me people are reading. It doesn’t tell me they waited three seconds for the cover image to load on their phone. That number only surfaces if you look for it specifically.

What the Observability Stack Is Missing

The honest accounting after this project is that the measurement infrastructure improved significantly, but it still has gaps.

What we have: X-Cache: HIT/MISS headers, Plausible analytics, k6 load tests in CI, Hugo pod replica counts, Flux reconciliation status.

What we don’t have:

Varnish metrics in Prometheus. Varnish exposes a rich statistics API via varnishstat. A varnish_exporter sidecar would publish these to Prometheus and make them queryable in Grafana: cache hit rate over time, object eviction rate, backend request latency distribution, thread pool saturation. Right now, if Varnish’s cache hit rate drops from 99% to 60% overnight because a deployment reset the cache, I won’t know until I manually curl the site and look at the header. I haven’t wired Varnish metrics into Prometheus yet because manual checks were acceptable while focusing on the core caching architecture.

Structured logs in Loki. Varnish can emit access logs in any format varnishncsa supports. Forwarding these to Loki means you can write LogQL queries like “show me all requests that were cache misses and took more than 200ms to serve”, which immediately identifies pages that are either uncached or slow to fetch from the Hugo backend. Currently, those logs live in the container and are gone when the pod rotates.

Distributed traces in Tempo via OpenTelemetry. This is where the picture becomes genuinely useful. A trace that spans the full request path (nginx → Varnish → Hugo) would show you exactly where time is being spent on a cache miss. Is it the TLS handshake in nginx? The VCL processing in Varnish? The static file read in the Hugo pod? OTEL instrumented at each layer, with traces collected in Tempo, would answer that question for every request instead of making you guess based on aggregate metrics.

The irony is that the Kubernetes cluster already runs a full observability stack — Prometheus, Loki, and Tempo are all running in the cluster. The gap is that Varnish and nginx are not instrumented to use them. Adding varnish_exporter as a DaemonSet sidecar and configuring Varnish’s VSL log format to emit something Promtail can parse would close most of the gap without any new infrastructure.
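Until the exporter is wired in, the hit rate can at least be read from the counters directly. The helper below is a stopgap sketch: `hit_ratio` is a hypothetical name, and it assumes the usual `varnishstat -1` layout of counter name followed by value.

```shell
#!/bin/sh
# Compute the cache hit percentage from `varnishstat -1` output on stdin.
hit_ratio() {
  awk '
    $1 == "MAIN.cache_hit"  { hit = $2 }
    $1 == "MAIN.cache_miss" { miss = $2 }
    END {
      if (hit + miss == 0) { print "no requests yet"; exit 1 }
      printf "%.2f\n", 100 * hit / (hit + miss)
    }'
}
```

Against a running pod this would be something like `kubectl exec <varnish-pod> -- varnishstat -1 | hit_ratio`, repeated per node since each DaemonSet pod keeps its own counters.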

The k6 tests can also export their metrics over OpenTelemetry via the xk6-output-opentelemetry extension once it is configured. Correlating load test runs with the observability data (“run k6 at 600 VUs, watch Varnish thread pool saturation in Grafana, see request latency distributions in Tempo”) would turn these tests from point-in-time snapshots into a continuous improvement loop.

What Actually Changed

From the first Varnish pod to the DaemonSet, the architecture shifted in three meaningful ways:

Cache locality. Each node now has its own Varnish pod and its own warm cache. A request arriving at storage1 is served from storage1’s memory, not from node02’s memory over a 1G network link. For the blog’s small URL set (~20 pages), each pod’s cache warms to >99% hit rate within the first few hundred requests after a Varnish restart.

ECMP utilization. The BGP ECMP setup now actually distributes both inbound traffic and cache serving across all three nodes. Previously it distributed inbound traffic and then funneled cache serving back to one node, which negated half the point of ECMP.

Hugo isolation. Hugo pods see orders of magnitude fewer requests. In a 3m30s load test, the Hugo pods collectively processed about 27 requests: one cold miss per URL per Varnish pod, plus the occasional TTL expiry. Everything else, tens of thousands of requests, was served from memory without Hugo being involved at all.

The numbers that matter:

| Metric | Before | After |
|---|---|---|
| Varnish pods | 1 (Deployment) | 3 (DaemonSet) |
| Cross-node hops for cached requests | 2/3 of all requests | 0 |
| TTFB (median) | 28ms | 25ms |
| Cache hit rate | >99% | >99% (per node) |
| Hugo requests during load test | ~27 | ~27 (distributed across 3 pods) |

The Recurring Theme

Every step of this project produced a measurement that contradicted the previous assumption.

Plausible showed people were reading, so I cared about performance. Varnish showed near-perfect cache hit rates, so I thought the serving was optimal. k6 with fake URLs showed 62% failure rate, which looked catastrophic until the URL set was fixed. The OPi6 comparison showed similar performance to in-cluster, which looked like “no benefit” until the ECMP topology argument reframed it. The DaemonSet test showed 226 req/s, which looked like a regression until http_req_waiting showed 25ms TTFB, which revealed the actual bottleneck was body transfer, not Varnish.

And underneath all of it, invisible because the network was fast enough to hide it: unoptimized PNG images on a blog post about infrastructure performance.

“Meten is weten” — measuring is knowing — is not just a reminder to run tests. It’s a reminder that the framing of the measurement determines what you know. Change the metric and you change the conclusion. The load test is only as useful as your understanding of what it’s actually measuring.

The blog is faster now, the architecture is better, and the k6 tests live in CI. The next step is wiring Varnish’s metrics into Prometheus and its logs into Loki, so that “meten is weten” stops being a point-in-time exercise and becomes an always-on property of the system.

And next time, I will run Lighthouse on the images before building a distributed cache in front of them.

References

- The AI That Monitored Your Cluster Just Brought It Down — the incident that started the architecture review

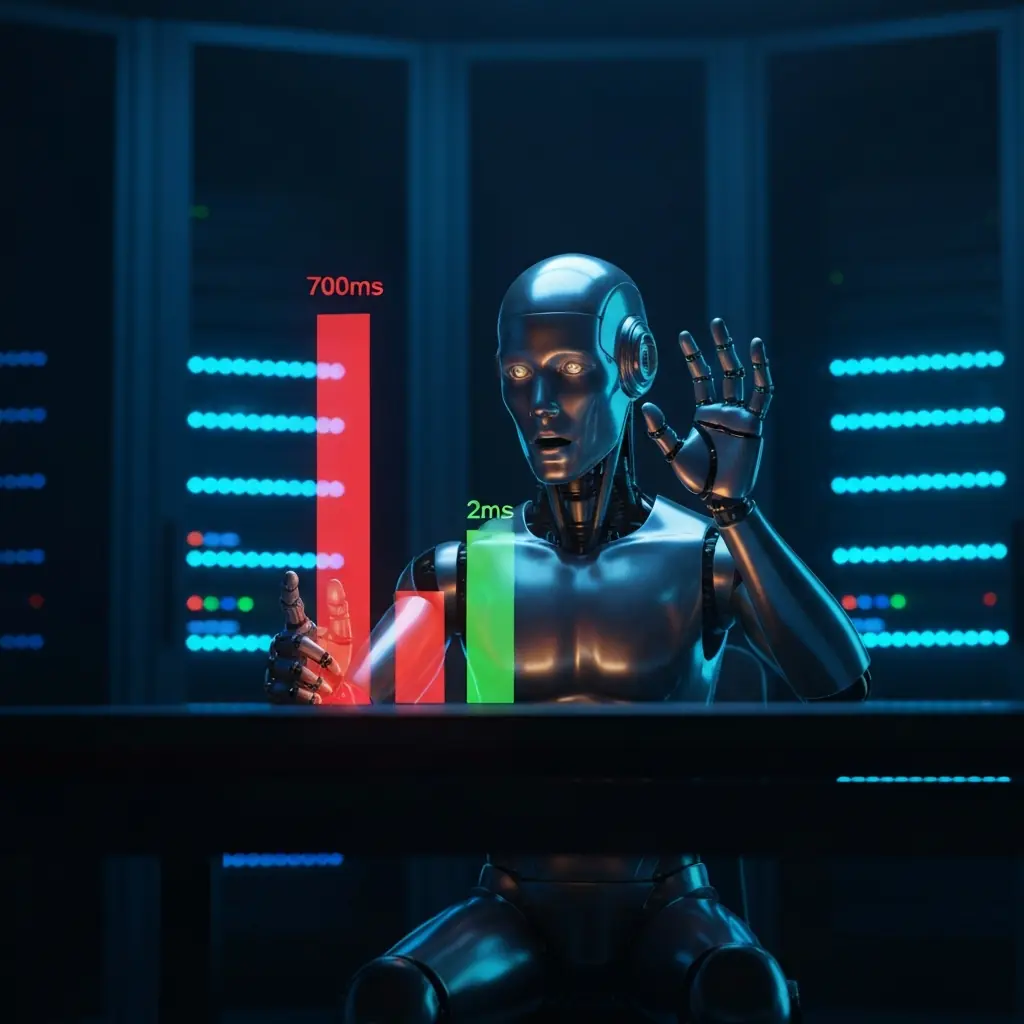

- 700ms to 2ms: What a Cluster Fire Taught Me About Embedding — why measurement context matters for latency numbers

- Ending the commit storm: validating FluxCD manifests locally — the GitOps tooling behind the deployments

- k6 load testing documentation, specifically the section on HTTP request types and what responseType: 'none' actually does

- Varnish VCL reference, varnish-cache.org

- internalTrafficPolicy, Kubernetes docs