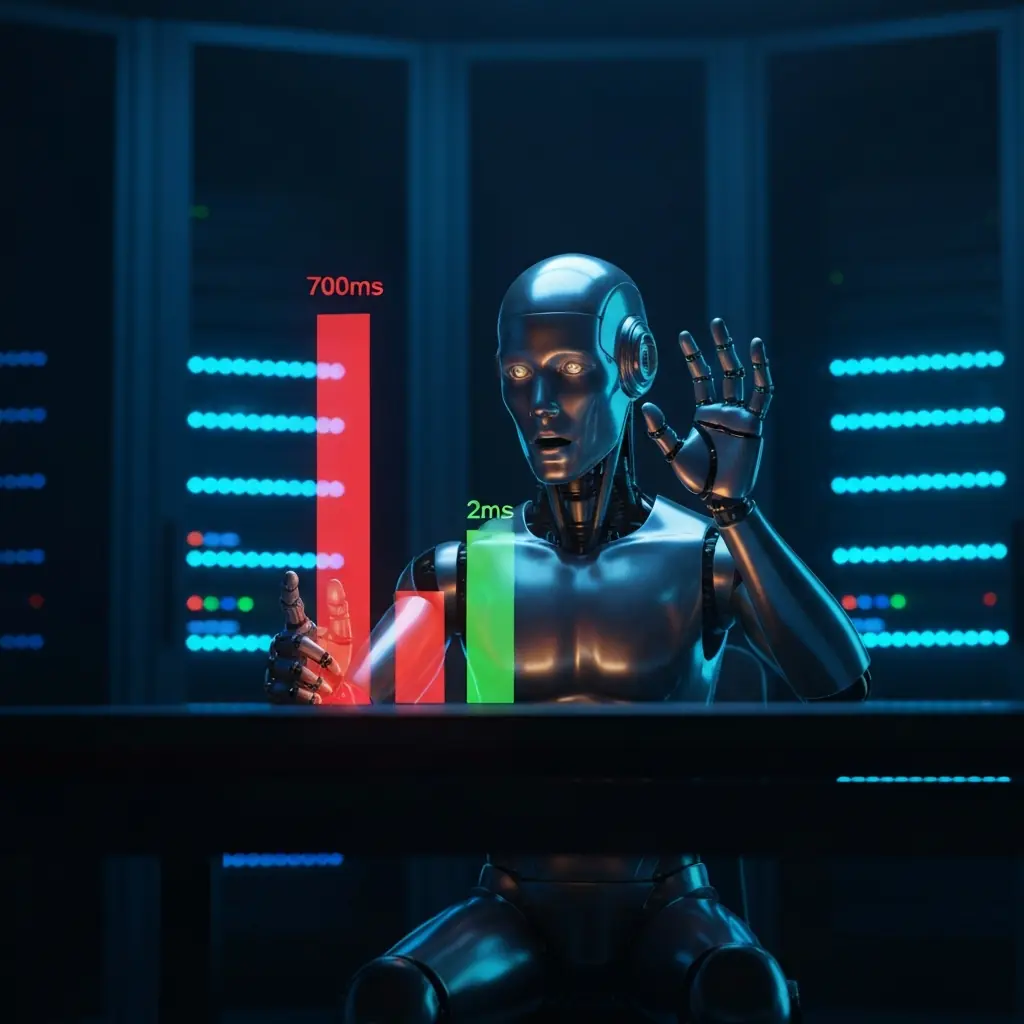

700ms to 2ms: What a Cluster Fire Taught Me About Embedding

Cover: after Wassily Kandinsky, Composition VIII — the 700ms→2ms epiphany as pure visual music — a cyan circle radiating into smaller satellites.

700ms. That was the number that haunted my Kubernetes cluster while it slowly burned to the ground. Every alert the cluster generated, every log line it processed for AI-driven feedback, triggered an embedding operation. Each embedding, we thought, took 700ms and saturated a CPU core, which in turn triggered more alerts, creating a truly spectacular, self-immolating feedback loop. The load average climbed to 153. It was a cluster fire in every sense but the literal one. Then, in the chaotic aftermath of patching the inferno and moving that “expensive” embedding workload to a humble 15-watt ARM board, something remarkable emerged: the warm latency was a mere 2ms. Even a cold start, including model loading, clocked in at around 100ms. The sobering discovery? The 700ms was never the cost of the embedding operation itself. It was the embedding struggling under full CPU saturation, starved of headroom. On a quiet, dedicated machine with a warm model, the exact same task takes 2 milliseconds.

April 2026 — benchmarking the operation everyone said was expensive

Why Embedding Was “Expensive”

For months, I treated embedding as a heavy, expensive operation. I had observed the system taking 24 to 30 seconds per call and naturally assumed the model was just slow. The reality was far more embarrassing: I was benchmarking a workload on a machine that was already on fire. My Kubernetes node was hitting a load average of 153 on 16 cores. When you are that saturated, you aren’t measuring computation; you are measuring contention.
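To make "contention, not computation" concrete, here is a minimal sketch of the sanity check I should have run before trusting any benchmark. The function names and the 1.0 threshold are my own illustrative assumptions, not part of any tool mentioned here.

```python
import os


def load_per_core(load_average: float, cores: int) -> float:
    """Roughly how many tasks are competing for each core."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return load_average / cores


def is_saturated(load_average: float, cores: int, threshold: float = 1.0) -> bool:
    # Assumed rule of thumb: above ~1.0 per core, work is queueing for CPU time,
    # so any latency measured on this machine mostly reflects waiting.
    return load_per_core(load_average, cores) > threshold


def current_load_per_core() -> float:
    """Read the 1-minute load average of this machine (Unix only)."""
    one_minute, _, _ = os.getloadavg()
    return load_per_core(one_minute, os.cpu_count() or 1)
```

With the numbers from the incident, `load_per_core(153, 16)` comes out at about 9.6: nearly ten tasks fighting over every core.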

The embedding process wasn’t slow; it was just waiting in a massive queue for a sliver of CPU time. This is the danger of observing a system under duress—latency spikes look like architectural bottlenecks when they are actually just the sound of a CPU screaming for help. In reality, the actual math of embedding on modern silicon is incredibly fast. The model is small and the operations are mostly matrix multiplications, which modern ARM cores handle in hardware via i8mm. I wasn’t fighting a slow model; I was fighting a scheduler. It was a simple, brutal truth.

What Embedding Actually Is

At its core, embedding is just the process of converting a piece of text into a list of numbers, called a vector, that encodes semantic meaning. Think of it as a coordinate in a high-dimensional map. If two pieces of text have similar meanings—like “KubePodCrashLooping” and “pod restarting”—their vectors end up close together in that space. If you embed “pizza recipe,” it ends up in a completely different neighborhood. This allows for semantic search: instead of hunting for exact keywords, the system just measures the distance between vectors.
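"Measuring the distance between vectors" usually means something like cosine similarity. The sketch below uses three-dimensional toy vectors that I invented for illustration; real embeddings from this model have 768 dimensions, and the values here are not actual model output.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Toy vectors, made up for this example only.
crash_looping = [0.9, 0.1, 0.0]   # stands in for "KubePodCrashLooping"
pod_restarting = [0.8, 0.2, 0.1]  # stands in for "pod restarting"
pizza_recipe = [0.0, 0.1, 0.9]    # stands in for "pizza recipe"

near = cosine_similarity(crash_looping, pod_restarting)
far = cosine_similarity(crash_looping, pizza_recipe)
```

Here `near` is much larger than `far`, which is all a semantic search needs to rank "pod restarting" above "pizza recipe".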

The model I use, nomic-embed-text, is tiny: 81MB. I use a Q4 quantized version, which means the weights are compressed to 4-bit integers to make the model smaller and faster. For this use case, the quality loss is negligible, but the efficiency gain is huge. I haven’t benchmarked other quantization levels yet because Q4 is proving perfectly adequate for these semantic search needs. Whether the internal math uses F32 or Q4, the output has the same shape: a vector of 768 floating-point numbers that gets stored in Qdrant for later retrieval.
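For intuition about what "compressed to 4-bit integers" means, here is a deliberately simplified sketch. It uses one scale for the whole list, which is my assumption for brevity; real Q4 formats typically work block by block with a scale per block, and this is not the actual file format the model ships in.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-8, 7] plus one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    # Clamp to the signed 4-bit range after rounding to the nearest step.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_4bit(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.62, -0.31, 0.05, 0.0, -0.7]  # made-up example weights
codes, scale = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale)
```

Each restored weight lands within half a quantization step of the original, which is the "negligible quality loss" trade in miniature: sixteen possible values per weight instead of a full 32-bit float.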

The 2ms Discovery

I spent a fair amount of time benchmarking three different configurations, expecting a custom-compiled version of llama.cpp with SVE2 and i8mm instructions to be the clear winner. I tested generic ARM64 Ollama, the specialized SVE2 build, and the Ollama reference.

The results were a bit of a letdown: they all landed between 2ms and 3ms once warm. The SVE2 build was technically 33% faster than the generic one, but a 1ms difference is completely irrelevant when you’re operating at these speeds.

| State | Latency | Note |
|---|---|---|
| Saturated Cluster | 700ms | Measuring CPU contention, not computation |
| Cold Start | ~100ms | Loading 81MB model from disk to RAM |
| Warm (Generic) | 2–3ms | Hardware-accelerated matrix multiplication |

The real epiphany came when I compared this to the 700ms latency that had been burning my cluster. The same model, the same math, but 350 times faster simply because the CPU had headroom. There is a “cold start” where the 81MB model loads from disk, taking about 100ms, but since the model fits entirely in RAM and the OS keeps it in the page cache, every subsequent call is “warm.” For a service doing hundreds of embeddings an hour, you are effectively always warm.
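If you want to reproduce the cold-versus-warm split yourself, a small timing harness is enough. This is a sketch under my own assumptions: `embed` is any callable you supply that performs one embedding, and the helper names are hypothetical rather than taken from my actual benchmark scripts.

```python
import statistics
import time


def time_calls(embed, texts):
    """Return the latency of each embed(text) call in milliseconds, in order."""
    latencies = []
    for text in texts:
        start = time.perf_counter()
        embed(text)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def summarize(latencies_ms):
    """Treat the first call as cold (model load) and the rest as warm."""
    if len(latencies_ms) < 2:
        raise ValueError("need at least one cold and one warm call")
    return {
        "cold_ms": latencies_ms[0],
        "warm_median_ms": statistics.median(latencies_ms[1:]),
    }


def speedup(before_ms, after_ms):
    """How many times faster the second measurement is."""
    return before_ms / after_ms
```

Reporting the median of the warm calls keeps one noisy sample from skewing the result, and `speedup(700, 2)` gives the 350x figure quoted above.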

Running It Locally

The final stack is refreshingly simple. I’m running Ollama on ARM64 via a single binary install on an Orange Pi 6 Plus. It exposes a standard HTTP API, so the transition was trivial: I just updated the OLLAMA_HOST environment variable in my brain-ingest CronJobs to point to http://10.1.1.25:8000. The board costs about €150, sips 15-25W of power, and handles the embedding workload without breaking a sweat.
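For readers who have not called Ollama directly, a request looks roughly like this. It is a sketch using only the standard library: it assumes `OLLAMA_HOST` holds a full URL as in my setup, and that the server exposes Ollama's `/api/embeddings` endpoint, which takes a `model` and a `prompt` and returns an `embedding` array. The helper names are mine.

```python
import json
import os
import urllib.request


def build_embed_request(text, model="nomic-embed-text", host=None):
    """Build (but do not send) an HTTP request for one embedding."""
    # Assumption: the variable contains a full URL, e.g. http://10.1.1.25:8000
    host = host or os.environ.get("OLLAMA_HOST", "http://10.1.1.25:8000")
    body = json.dumps({"model": model, "prompt": text}).encode("utf-8")
    return urllib.request.Request(
        host.rstrip("/") + "/api/embeddings",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def embed(text, **kwargs):
    """Send the request and return the embedding as a list of floats."""
    request = build_embed_request(text, **kwargs)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.loads(response.read())["embedding"]
```

Because the host is read from the environment, moving the workload to another machine is a configuration change rather than a code change.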

For storage, I use Qdrant, a highly efficient Rust binary that runs anywhere. When you compare this to using OpenAI’s text-embedding-ada-002, the local approach wins on every front. Not only do I avoid the cost per thousand tokens and the inevitable network latency, but I also stop sending my cluster’s internal incident data to a third party. It turns out that running a dedicated, low-power ARM board is faster, cheaper, and infinitely more private than an API call.

Fast Embeddings Change the Architecture

When I talk about embedding latency, the difference between 700ms and 2ms isn’t just a number; it’s a paradigm shift in how you architect systems. At 700ms, you design around the slowness. You batch requests, cache aggressively, and meticulously avoid embedding operations in the real-time request path. It’s a bottleneck, a chokepoint you have to manage.

But at 2ms? You stop designing around it entirely. You embed inline, in real-time, for everything. Every alert, every log event, every deployment event gets embedded at ingestion without a second thought, because the operation is effectively instantaneous. The architecture simplifies dramatically. You no longer need complex queues to protect a slow backend; the backend isn’t slow anymore.
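Embedding inline at ingestion can be as small as the sketch below. It is illustrative only: `embed` and `upsert` are callables you would wire to your embedding service and vector store, and the payload fields are names I made up for the example. The point dictionary follows the id, vector, and payload shape that Qdrant uses for points.

```python
import uuid
from datetime import datetime, timezone


def build_point(event_text, vector, source):
    """Package one event as a point: id, vector, and searchable metadata."""
    return {
        "id": str(uuid.uuid4()),
        "vector": list(vector),
        "payload": {
            "text": event_text,
            "source": source,  # hypothetical field, e.g. "alertmanager"
            "ingested_at": datetime.now(timezone.utc).isoformat(),
        },
    }


def ingest(event_text, source, embed, upsert):
    """Embed the event and store it immediately: no queue, no batch."""
    point = build_point(event_text, embed(event_text), source)
    upsert([point])
    return point
```

There is no buffering layer here at all, which is only reasonable because the `embed` call returns in a couple of milliseconds.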

The practical consequence is profound: your RAG index stays current. If an incident starts at 14:00 and I’m querying for similar events at 14:05, those five minutes of alerts are already in the index, already searchable. This is how real-time semantic memory becomes practical. Compare that to a common alternative: with a batch embedding job running hourly, up to 59 minutes of your most recent, most critical context can be missing when you need it most, leaving you blind in a crisis.
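The 14:00 versus 14:05 scenario can be checked with a few lines of date arithmetic. This sketch assumes batch runs are aligned to midnight and ignores the time a batch takes to run; the function name and the sample date are mine.

```python
from datetime import datetime, timedelta


def visible_events(event_times, query_time, batch_interval=None):
    """Events already searchable at query_time.

    batch_interval=None models inline embedding: everything up to now is indexed.
    Otherwise only events before the most recent batch run are indexed.
    """
    if batch_interval is None:
        cutoff = query_time
    else:
        midnight = query_time.replace(hour=0, minute=0, second=0, microsecond=0)
        completed_runs = (query_time - midnight) // batch_interval
        cutoff = midnight + completed_runs * batch_interval
    return [t for t in event_times if t <= cutoff]


incident_start = datetime(2026, 4, 1, 14, 0)
# One alert every minute, starting 30 seconds into the incident.
alerts = [incident_start + timedelta(minutes=i, seconds=30) for i in range(5)]
query_time = incident_start + timedelta(minutes=5)

inline = visible_events(alerts, query_time)
hourly = visible_events(alerts, query_time, batch_interval=timedelta(hours=1))
```

Inline embedding sees all five alerts at 14:05. The hourly batch last ran at 14:00, so it sees none of them.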

The RAG Ops Use Case

This shift in embedding speed unlocks concrete, immediately impactful applications in operations. When a pod starts crash-looping at 2 AM, I don’t want an AI that guesses based on general knowledge; I want an AI that searches my specific operational history. Imagine a system that tells me: “The last time this pod crash-looped, it was because of a misconfigured secret that was rotated at 01:47. Here is the alert that preceded it. Here is the fix that resolved it.”

That level of grounded, actionable insight is only possible if every alert, every event, every piece of my operational history is embedded and queryable. This is where functions like assess_risk() come in. Before any significant write operation in the cluster, the system queries the Qdrant index for similar past incidents. It returns a risk_score and a recommendation, providing context from my actual cluster history.
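To show the shape of such a check, here is a hypothetical sketch of an `assess_risk()` function. The scoring rule, the `PastIncident` fields, and the threshold are all invented for illustration and are not my production logic; the input stands in for whatever a similarity search against the index returns.

```python
from dataclasses import dataclass


@dataclass
class PastIncident:
    summary: str
    similarity: float    # similarity to the proposed action, 0.0 to 1.0
    caused_outage: bool  # hypothetical label attached to the stored event


def assess_risk(similar_incidents, top_k=3, approval_threshold=0.5):
    """Score a proposed action by how much its nearest history went badly."""
    top = sorted(similar_incidents, key=lambda i: i.similarity, reverse=True)[:top_k]
    total = sum(i.similarity for i in top)
    bad = sum(i.similarity for i in top if i.caused_outage)
    # Similarity-weighted share of the nearest incidents that ended in an outage.
    risk_score = bad / total if total > 0 else 0.0
    recommendation = (
        "require approval" if risk_score >= approval_threshold else "proceed"
    )
    return {"risk_score": risk_score, "recommendation": recommendation, "evidence": top}


history = [
    PastIncident("secret rotated, pod crash-looped", 0.9, True),
    PastIncident("routine restart", 0.3, False),
    PastIncident("unrelated disk alert", 0.1, False),
    PastIncident("config reload", 0.2, False),
]
result = assess_risk(history)
```

Returning the evidence alongside the score is the important design choice: the approval screen can show the three most similar past events instead of a bare number.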

In tools like LibreChat, when the AI agent proposes an action, I see the evidence: the proposed action, the risk score, and the three most similar past events. My approval is grounded in retrieved facts, not a black box. This is the fundamental difference between AI-assisted operations and truly AI-managed operations – and it hinges entirely on having a fast, always-current, local embedding pipeline. At 700ms, you simply can’t keep that index current in real-time. At 2ms, you can.

Why This Matters Beyond the Homelab

This isn’t just a curiosity for my homelab. Local embedding at 2ms on a 15-watt ARM board, right on the network edge, fundamentally challenges the assumption that semantic search demands hyperscale cloud infrastructure. The embedding model itself is a compact 81MB. The hardware requirement is surprisingly modest, and the efficiency of modern quantized models makes the “cloud-only” argument for RAG increasingly obsolete.

The real lesson here isn’t a theological debate between ARM and x86, or a product pitch for Ollama versus some managed embedding service. It’s far more fundamental: the seemingly immutable constraints we often accept as gospel — “embedding is inherently slow,” “RAG absolutely demands the cloud,” “local AI is, at best, a charming toy” — were never absolute limits carved in stone. They were merely symptoms, artifacts of a specific setup and an unquestioned architecture. The very moment you lift that workload out of a system already gasping for air at 100% capacity and give it room to breathe, the entire landscape of possibility shifts. This isn’t theoretical; it’s running in production today, on a board that costs less than a decent dinner, drawing 15 watts, and delivering embeddings at a consistent 2ms. It is not a toy.
